Tesla News: Musk Tweets Details on Fundamental Autopilot Rewrite

There is so much going on with Tesla, especially with Autopilot and Full Self-Driving, that you would be forgiven for not keeping up with the details. Elon Musk recently tweeted that the company has been performing a fundamental rewrite of the code behind its self-driving AI. It should mean a big leap forward for the future of commuting.

Two predominant approaches are at play in self-driving AI: one is lidar-based, the other is visual. Both have their merits, but both still have a way to go before most people will trust them to drive their kids to school.

With lidar, you see companies like Waymo (and Volvo) using very precise lasers to create centimetre-accurate maps of routes. Later, cars drive on “rails” on that mapped route. Using sensors, usually lidar, they make sure they don’t mow anything down in their path.

That’s great, as lidar is superhuman, allowing it to see under cars and around obstacles. It’s also currently very expensive.

The visual approach, which Tesla has championed, acts more like a human driver. It has a GPS (which fortunately it doesn’t have to look away from the road to check) and a plethora of cameras.

Tesla’s head of AI, Andrej Karpathy, recently gave a keynote about this, which I’ll put below.

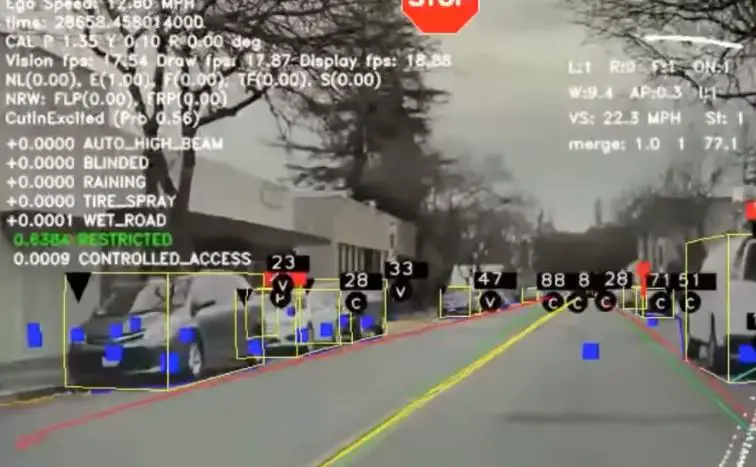

An AI is trained to recognize common objects on the roadway via these cameras and react accordingly. With every logged incident, the system gets better for the whole fleet, and over time it is trained to have not only superhuman attentiveness but also vision in all directions.

Where humans have two eyes that allow for 3D vision, cars can have many cameras facing in all directions. The current problem is that Tesla hasn’t always trained its AI to see the world in 3D. What it gets is a collection of 2D feeds, like a wall of security cameras. Adding stereoscopic depth perception to the equation would significantly upgrade the system. If you’d like a demonstration, buy an eye-patch.
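For the curious, here is a minimal sketch of the textbook idea behind stereoscopic depth: two cameras a known distance apart see the same object at slightly different horizontal positions, and that shift (the disparity) tells you how far away the object is. This is not Tesla's code or method; the function name and the focal length, baseline, and disparity values are made up purely for illustration.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Estimate distance to an object from a rectified stereo camera pair.

    focal_px     -- camera focal length, in pixels (hypothetical value)
    baseline_m   -- distance between the two cameras, in metres (hypothetical value)
    disparity_px -- horizontal shift of the object between the two images, in pixels
    """
    if disparity_px <= 0:
        # Zero disparity would mean the object is infinitely far away.
        raise ValueError("disparity must be positive")
    # Classic pinhole stereo relation: depth = focal length * baseline / disparity
    return focal_px * baseline_m / disparity_px


# An object that shifts 25 pixels between cameras mounted 0.5 m apart
near = depth_from_disparity(focal_px=1000.0, baseline_m=0.5, disparity_px=25.0)
# The same object shifting only 5 pixels is five times farther away
far = depth_from_disparity(focal_px=1000.0, baseline_m=0.5, disparity_px=5.0)
print(near, far)
```

Cover one camera (the eye-patch) and the disparity disappears, which is why a single 2D feed has to guess at distance from other cues.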

Now Tesla has nearly finished doing just that: rewriting its AI to see the world in 3D, as well as from many angles. That will make identifying moving objects much easier and more accurate, and give the system an extra edge in spotting objects like road signs.

https://twitter.com/elonmusk/status/1278539278356791298?s=20

Tesla currently has a huge lead on the competition, despite taking the longer and more difficult visual approach. Its massive lead in miles driven, thanks to a larger fleet of contributing consumer vehicles, will now be applied retroactively to a new, superior 3D system.

Musk says that the 3D visualization update is only “2-4 months” away. Take that with a grain of salt, because he also said there would be 1 million robo-taxis by the end of this year. Regardless, since Tesla has already pushed the ability to follow lead cars through traffic lights to early-access users, expect this year to see Full Self-Driving, 3D or not.

https://twitter.com/elonmusk/status/1278537592569462784?s=20

When this 3D FSD does finally make its way into cars, it will be time for an all-out regulatory discussion that can usher in the robo-taxis, which are running behind schedule.